The Collaboration Software Brands Dominating AI Search and Why

Third-party review platforms dominate AI citations in collaboration software. Lantern's April 2026 data shows which content formats and intent categories are driving visibility.

Ask any AI engine to recommend a project management tool for a remote team, and it will produce an answer. But the question collaboration software brands should really be asking is: “Does our name appear in that answer, and where is it coming from?”

To answer that question, Lantern tracked 200 million prompt responses directly from ChatGPT, Gemini, Perplexity, Claude, and AI Overviews, mapping citation patterns, domain authority, and brand share of voice across the collaboration software category in April 2026.

The findings below break down the specific data points that marketing and content teams at collaboration software companies can act on.

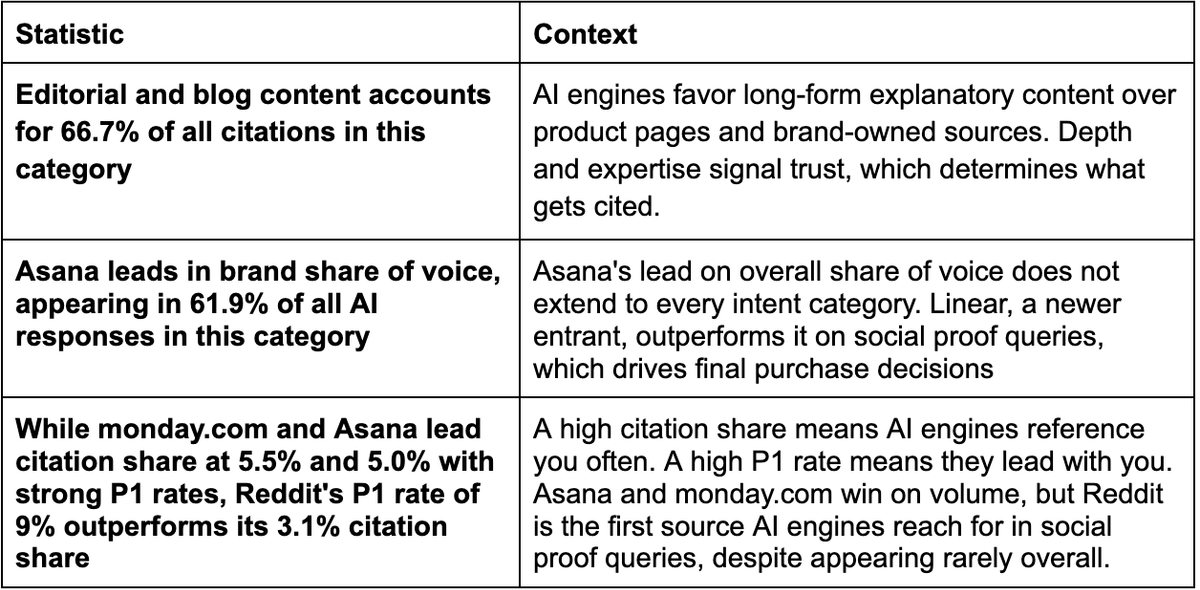

Summary: 2026 Collaboration Software AI Search Stats Every Marketer Should Know

Here’s a quick snapshot of how AI engines are citing brands in this category:

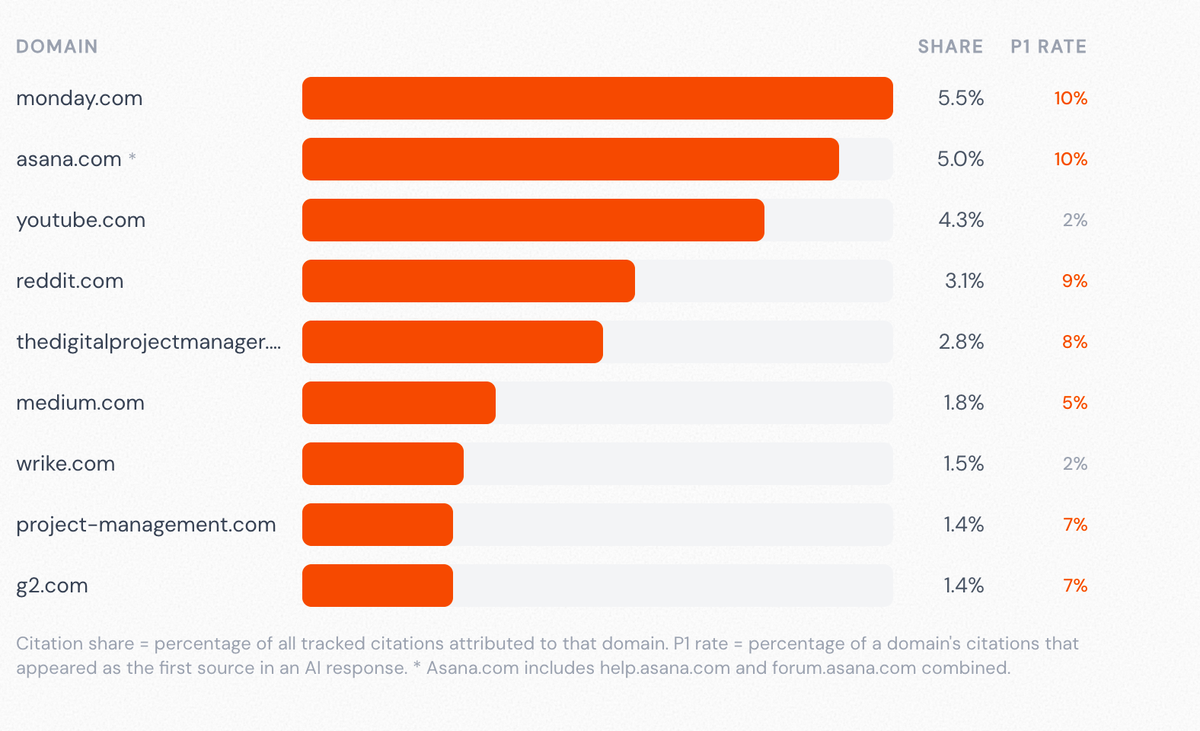

Which Domains Win AI Citations in Collaboration Software?

Monday.com and Asana sit at the top of the domain citation table at 5.5% and 5.0% respectively, both carrying Position 1 rates of 10%. But their dominance is not a straightforward reward for brand investment or content volume.

Source: Lantern AI Search Content Visibility Report on Collaboration Software, April 2026

Look closer, and their position reflects the density of high-quality third-party editorial coverage, comparison content, and practitioner reviews that reference them across the web. AI engines are not primarily citing their product pages; they are drawing on the independent sources that have built up around them. While the domain gets the credit, the brand does not always control the narrative behind it.

That context makes the Reddit finding even more instructive. Despite a citation share of just 3.1%, Reddit has a high Position 1 rate of 9%, which suggests that when AI engines field social-proof queries about collaboration tools, they reach for community content first.

For marketing teams, this means that a brand’s G2 profile, its Capterra listing, and the Reddit threads that discuss it are primary AI search assets. They are often the first point of contact between the brand and an AI engine answering a buyer's query.

Why Editorial Content Dominates AI Search

When a user asks an AI engine which project management tool is right for their team, the engine builds its response from sources it considers credible. The data shows that, in this category, those sources are mostly editorial content and blogs.

A well-written editorial piece on a specific topic, such as "how to manage engineering sprints across time zones" or "what to look for in a project management tool for creative teams," is evidence that someone has thought carefully about a problem and produced a substantive answer.

That is the signal AI engines try to identify when deciding what to cite.

Brand websites, by contrast, account for just 3.3% of all citations, which suggests that product pages rarely get cited even when they contain accurate and relevant information. Product pages are optimized to convert, so their information is framed around the brand's interests rather than the reader's question.

In practice, editorial content published on third-party sites and in independent reviews is doing more work in AI search than most marketing teams realize.

Why Marketing Teams Need To Invest in Social Proof

The report shows that Asana leads eight of the ten intent categories. It also holds nearly half of all purchase-intent citations in the category. By most measures, it is the dominant brand in AI search for collaboration software.

Then there is the social-proof finding. Linear, a newer entrant, leads social-proof queries with a 31.6% share, ahead of Asana, monday.com, and Jira.

Social-proof queries are the ones buyers submit once they have narrowed their shortlist and want validation, such as "Is Linear actually good?" or "What do engineers think of Linear?" These are late-stage evaluation queries, submitted when a buyer is close to a decision. And on those queries, Linear is outperforming tools with three to four times its overall visibility.

This suggests that Linear has built a strong reputation in the sources AI engines weight heavily for social-proof queries, such as Reddit discussions and developer forums.

An AI engine fielding a social-proof query is seeking evidence of real-world usage from credible practitioners.

What’s Next for Collaboration Software Brands?

Collaboration software teams can tailor their content to be more discoverable in AI search. Here are some content priorities based on the findings:

- Treat external profiles as primary content assets: Reddit threads, third-party editorial content, and G2 reviews are major citation sources. A review profile with detailed, outcome-specific content describing real use cases is a powerful AI search asset. Updating existing profiles will often outperform building new owned content from scratch.

- Invest in comparison content built for evaluation-mode buyers: Posts such as "Best project management tools for engineering teams" or "X vs. Y for marketing agencies" map to real buyer queries that call for targeted answers. Make sure this content is well structured and written to answer the query directly so that it is citable.