The Content Format That Wins AI Citations

Listicles drive 35.6% of all AI citations, more than any other format. Lantern's 200M+ citation dataset reveals why, and what your content team should do now.

Sometime in the last five years, a consensus formed in SEO circles that listicles were over.

The argument was reasonable on its face. Google's algorithm updates were rewarding depth, expertise, and original research. Thin "10 best X" posts were getting penalized. Content strategists advised their teams to retire the format in favor of long-form guides, pillar pages, and thought leadership. The listicle, the argument went, was a relic of a low-quality content era that search engines had moved past.

Most marketing teams restructured their editorial calendars. They invested in comprehensive guides and original research. They stopped writing listicles.

They were optimizing for the wrong engine.

What Lantern's Citation Data Actually Shows

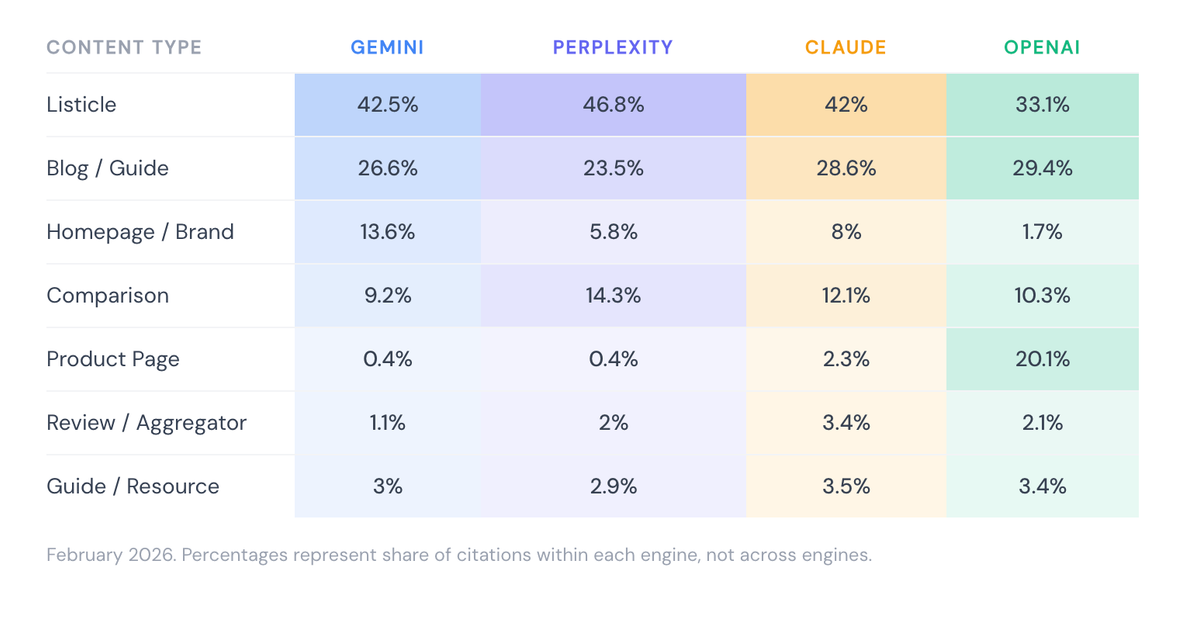

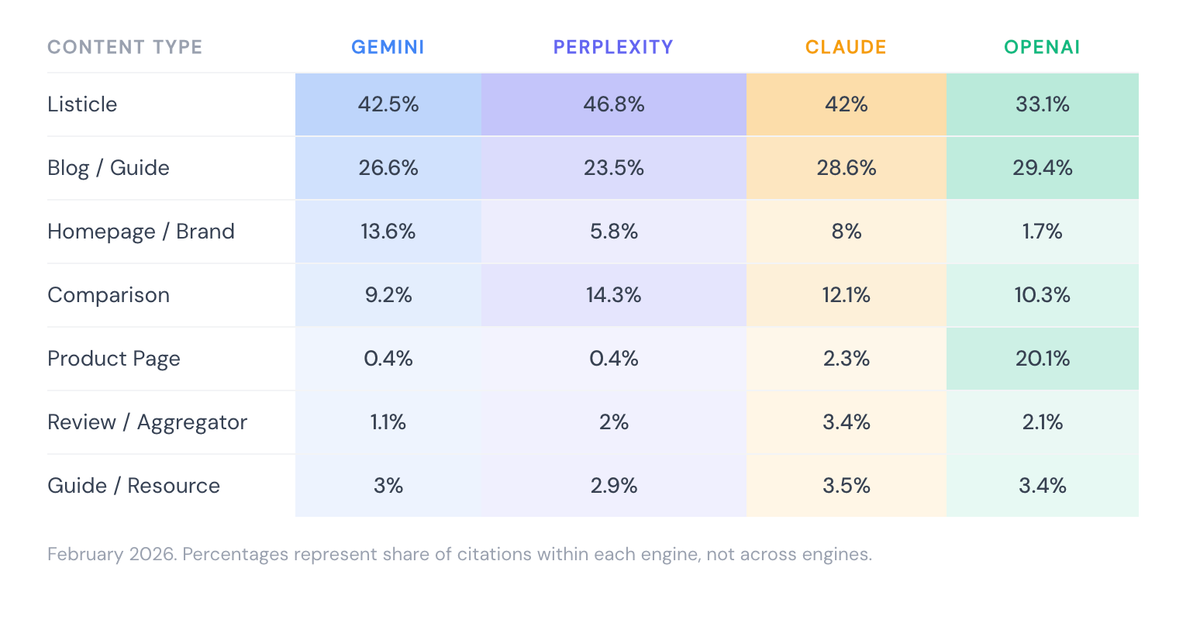

Lantern's February 2026 AI Citation Content Visibility Report analyzed over 200 million citations collected directly from ChatGPT, Perplexity, Gemini, and Claude. Across all four engines, one content format dominates citations more than any other.

More than one in three AI citations, across all query types, all industries, and all four major AI engines, points to a listicle.

Listicles and blog posts combined account for more than half of all AI citations. But the gap between first and second place is significant: listicles outperform the next closest format by more than 13 percentage points.

This is not a marginal finding. It is the dominant pattern in how AI engines source their answers.

Why AI Engines Favor List-Structured Content

Understanding why listicles win requires understanding how AI engines construct answers.

When a user submits a query, the AI engine is not retrieving a page and summarizing it. It is synthesizing an answer from multiple sources, extracting the most relevant and citable fragments from each. The structural question it is implicitly asking of every piece of content it considers is: can I extract a clean, specific, attributable answer from this?

List-structured content answers that question more reliably than almost any other format. Each item in a listicle is a discrete, labeled unit of information. It has a clear boundary, a specific claim, and a logical relationship to the overall topic. For an AI engine trying to construct a response to "what are the best tools for X" or "what should marketers know about Y," a well-constructed listicle is essentially pre-formatted for citation.

Long-form prose guides, by contrast, contain the same information distributed across paragraphs, subordinate clauses, and hedged arguments. The information may be richer and more nuanced, but it is harder to extract cleanly. AI engines can pull from it, but they have to do more work to find the citable fragment.

The irony is that the structural properties that made listicles look thin to Google's quality assessors (discreteness, brevity per point, clear enumeration) are precisely the properties that make them legible to AI engines.

The Breakdown by AI Engine

The preference for listicles is consistent across engines, but the degree varies in ways that have strategic implications for marketing teams.

Source: Lantern AI Citation Content Visibility Report, February 2026

Several patterns are worth noting here.

Perplexity has the highest listicle citation rate at 46.8%. Nearly half of all citations on Perplexity point to list-structured content. For brands targeting Perplexity, which has a disproportionately high share of technology and B2B professional users, listicle investment has a particularly direct return.

ChatGPT has the lowest listicle rate at 33.1% but the highest product page rate at 20.1%. This is a significant divergence from every other engine. ChatGPT is substantially more likely than Perplexity, Gemini, or Claude to cite a product page directly. For brands running e-commerce or SaaS businesses where product pages are central, ChatGPT represents a different optimization opportunity.

Comparison content performs consistently across all four engines at 9–14%. Given that comparison queries ("X vs Y," "best tools for Z," "alternatives to X") are among the highest purchase-intent queries in B2B search, this citation rate punches above its weight in revenue terms. A format that captures 9–14% of citations but represents a much smaller share of published content is systematically underproduced.

What "Listicle" Actually Means in This Context

The finding that listicles dominate AI citations does not mean that thin, SEO-farm-style list posts are making a comeback. The distinction matters.

The listicles that get cited share specific structural and substantive characteristics that separate them from low-quality enumerated content:

Each item carries a specific, verifiable claim. "Use structured data markup" is a citable item. "Make your content better" is not. AI engines are extracting claims they can reproduce with confidence. Vague items that require interpretation are less extractable than specific ones that stand alone.

The list has a clear organizing principle. "7 reasons X matters" and "the 5 most cited content formats in AI search" have explicit logic. Arbitrary collections of loosely related points are harder for AI to cite because the relationship between items is ambiguous.

Items are substantive enough to support the claim in the headline. A listicle that promises "10 strategies for improving AI search visibility" and delivers 10 one-sentence items is not the same as one that delivers 10 items each with two to three sentences of supporting context. The latter is more citable because each item contains enough information to anchor a claim.

The overall piece has genuine editorial value. AI engines are not simply pattern-matching on structure. They are also evaluating whether the content is worth citing. A well-structured listicle on a topic where the brand has genuine expertise will outperform a well-structured listicle that aggregates obvious information from other sources.

The Gap Most Marketing Teams Have Created

When content teams deprioritized listicles in response to Google quality signals, they created an asymmetry that now works against them in AI search.

Consider the typical content library of a B2B SaaS marketing team in 2026. It likely contains a handful of comprehensive guides, several pillar pages, a library of blog posts covering industry topics, and a set of comparison pages built for SEO. It probably contains very few listicles, and those that exist are likely older, lower-priority pieces that haven't been updated.

That content library was built for a ranking environment that is no longer the dominant one. Pillar pages and comprehensive guides are valuable assets, but they are not what AI engines cite most often. The format that AI engines cite most often is the one most teams have the thinnest inventory of.

Closing this gap is not a matter of abandoning depth-first content. It is a matter of recognizing that listicles and authoritative long-form content serve different purposes in a world where AI search and traditional search coexist. Long-form content wins keyword rankings and establishes topical authority. Listicles get cited.

A content strategy that optimizes for only one of these outcomes is leaving significant AI search visibility on the table.

How to Build Listicles That Actually Get Cited

The structural requirements for AI-citable listicles are distinct from those optimized purely for traditional search. Marketing teams that understand the difference will produce content that performs across both channels.

Lead with the most specific item, not the most obvious one. AI engines favor content that provides evidence of expertise rather than content that summarizes widely available information. If every item in your list is something a reader could have inferred without reading it, the list provides weak signal. The more specific and earned the items, the stronger the citation signal.

Use a numbered format over a bulleted one. Numbered lists provide explicit sequencing that AI engines find easier to extract and attribute. "The 7 most cited content formats in AI search" is more extractable than "content formats that perform well in AI search." The number in the headline is a structural commitment that makes the content more parseable.

Write item headers as standalone claims. Each item header should be citable on its own without the surrounding context. "Listicles account for 35.6% of AI citations" is a standalone citable claim. "Why structure matters" requires reading the item to understand what is being asserted. AI engines extract headers frequently, so make sure yours say something specific.

Include at least two to three sentences of supporting context per item. Bare lists (items with no elaboration) are less citable than items with enough context to anchor the claim. The supporting sentences do not need to be long. They need to be specific enough that the AI can understand why the item belongs on the list.

Target queries with list-shaped answers. Not every topic lends itself to a listicle. The format works best for queries that are inherently enumerative: best practices, common mistakes, tools and tactics, factors that influence an outcome, steps in a process. Identifying the queries your buyers are directing at AI engines and asking which of those queries have list-shaped answers is the starting point for an effective listicle strategy.

Refresh existing listicles rather than creating only new ones. Lantern's citation data shows that 62% of the most cited pages were published within the last six months. Freshness is a significant factor in AI citation rates. An existing listicle on a relevant topic, updated with current data and new items, will often outperform a new piece built from scratch. The combination of domain history and fresh content is particularly strong.
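Two of the guidelines above (standalone item headers and at least two sentences of supporting context per item) lend themselves to a rough automated check on a draft. The following is a minimal sketch, not a method from Lantern's report. The function name `audit_listicle`, the "N. Header" line convention, and the four-word header threshold are all assumptions made for illustration; a short header is only a crude proxy for a header that cannot stand alone.

```python
import re

# Hypothetical thresholds chosen for illustration, not taken from the report.
MIN_SENTENCES = 2      # supporting sentences expected under each item
MIN_HEADER_WORDS = 4   # shorter headers are flagged as possibly too vague

ITEM_LINE = re.compile(r"^(\d+)\.\s+(.*)$")  # matches lines like "1. Header text"


def audit_listicle(text):
    """Return (item_number, header, problems) for each numbered item in a draft."""
    items = []
    for raw_line in text.splitlines():
        line = raw_line.strip()
        match = ITEM_LINE.match(line)
        if match:
            # A new numbered item starts here.
            items.append({"number": int(match.group(1)),
                          "header": match.group(2),
                          "body": []})
        elif items and line:
            # Any other non-empty line belongs to the most recent item.
            items[-1]["body"].append(line)

    report = []
    for item in items:
        body = " ".join(item["body"])
        # Rough sentence split: break after ., ! or ? when followed by whitespace.
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", body) if s]
        problems = []
        if len(sentences) < MIN_SENTENCES:
            problems.append("thin supporting context")
        if len(item["header"].split()) < MIN_HEADER_WORDS:
            problems.append("header may not stand alone")
        report.append((item["number"], item["header"], problems))
    return report


draft = """1. Listicles account for 35.6% of AI citations
Lantern reports this share across four engines. It is the largest of any format.

2. Why structure matters
Structure helps.
"""

for number, header, problems in audit_listicle(draft):
    print(number, header, problems or "ok")
```

A check like this only catches structural gaps. Whether each claim is specific, verifiable, and backed by genuine expertise still needs an editor's judgment.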

Integrating Listicles Into Your Content Calendar

For marketing teams restructuring their editorial approach around AI search performance, the practical question is one of balance.

The data supports a meaningful reallocation toward listicles without abandoning other formats. A content calendar that allocates 30 to 40 percent of editorial capacity to well-constructed listicles, with the remainder split between long-form guides, comparison content, and topical blog posts, would more closely mirror the citation distribution that AI engines actually produce.

The comparison content category deserves particular attention. At 9.3% of all AI citations, it is systematically underproduced relative to its citation rate. Brands that invest in "X vs Y" and "best tools for Z" listicles are targeting the queries with the highest purchase intent in their category. These posts pull double duty: they serve buyers in active evaluation mode, and they get cited in the AI answers those buyers receive.
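To make the 30 to 40 percent allocation concrete, the arithmetic can be sketched for a given number of pieces per planning period. This is an illustration under stated assumptions: the function name `allocate_calendar` is hypothetical, 35 percent is simply the midpoint of the suggested range, and the even split of the remainder across the other three formats is an assumption, since the report data cited here does not prescribe how to divide it.

```python
def allocate_calendar(total_pieces, listicle_percent=35):
    """Split a period's planned pieces between listicles and three other formats.

    listicle_percent is an integer percentage; 30 to 40 is the range
    suggested in the text. The even split of the remainder is an assumption.
    """
    other_formats = ["long-form guides", "comparison content", "topical blog posts"]

    listicles = round(total_pieces * listicle_percent / 100)
    remaining = total_pieces - listicles

    # Divide the remainder evenly; any leftover pieces go to the first formats listed.
    base, extra = divmod(remaining, len(other_formats))
    plan = {"listicles": listicles}
    for index, name in enumerate(other_formats):
        plan[name] = base + (1 if index < extra else 0)
    return plan


for name, count in allocate_calendar(20).items():
    print(f"{name}: {count}")
```

Teams that want to weight comparison content more heavily, given its purchase-intent value, could replace the even split with explicit per-format shares.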

Key Takeaways

- Listicles account for 35.6% of all AI citations across ChatGPT, Perplexity, Gemini, and Claude, more than any other content format

- The structural properties that made listicles look thin to Google's quality algorithms (discreteness, enumeration, brevity per point) are the same properties that make them highly extractable for AI engines

- Perplexity has the highest listicle citation rate at 46.8%; ChatGPT diverges significantly with a 20.1% product page citation rate

- Comparison pages account for 9.3% of citations but represent a fraction of most teams' published content; the category is systematically underproduced

- High-quality listicles that get cited share specific characteristics: standalone item headers, specific verifiable claims, and two to three sentences of supporting context per item

- Freshness matters: 62% of the most cited pages were published in the last six months, so updating existing listicles is as important as creating new ones

- The optimal content strategy for AI search visibility combines listicles (for citations) with long-form authoritative content (for topical authority and traditional search rankings)