AEO vs SEO in 2026: Key Differences and Why You Need Both Across Google, ChatGPT, and More

In 2026, “search” is no longer a single interface. People still use classic search engines, but they increasingly ask questions inside answer-first experiences such as AI summaries in search results.

Across major search experiences, the user journey is increasingly answer-first and conversation-enabled, meaning the “result” a user consumes is often a synthesized answer, not a list of ten blue links. In Google’s own documentation, AI Overviews and AI Mode are positioned as AI features that “surface relevant links,” help users “get to the gist” faster, and support deeper exploration for complex comparisons; both may use “query fan-out” (multiple related searches) to assemble a response and a wider set of supporting links than classic search.

On the Bing side, Microsoft describes the same macro-arc: search begins with crawling and indexing, but increasingly includes “Answers” (summarized responses with links) and generative AI features that produce LLM-generated results on top of traditional results, with references to sources for verification.

For marketers, this changes two fundamentals at once:

First, visibility is being redistributed across surfaces. Semrush’s 2025 analysis (10M+ keywords, plus clickstream via Datos) shows AI Overviews triggered for a meaningful and changing share of queries—rising from 6.49% (Jan 2025) to nearly 25% (July 2025) and settling around 15.69% (Nov 2025), while also expanding beyond purely informational queries into commercial, transactional, and navigational intent over the year.

Second, click economics are shifting. A widely cited Ahrefs study found that, for position-one results, the presence of AI Overviews is associated with materially lower click-through rates in affected query sets; in their measurement frame, AI Overviews reduced position-one CTR by ~34.5% for “AI Overview keywords,” based on year-over-year CTR comparisons (March 2024 vs March 2025).
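To make that reduction concrete, here is a minimal arithmetic sketch. It assumes the ~34.5% figure is read as a relative reduction (not a percentage-point drop); the baseline CTR and impression count are hypothetical placeholders, not measured values.

```python
def ctr_after_relative_drop(baseline_ctr: float, relative_drop: float) -> float:
    """Apply a relative (not percentage-point) reduction to a click-through rate."""
    return baseline_ctr * (1.0 - relative_drop)


def expected_clicks(impressions: int, ctr: float) -> float:
    """Expected clicks for a given number of impressions at a given CTR."""
    return impressions * ctr


# Hypothetical inputs for illustration only.
baseline_ctr = 0.20      # assumed position-one CTR without an AI Overview
relative_drop = 0.345    # ~34.5% relative reduction, as reported in the study
impressions = 10_000     # assumed monthly impressions for the query set

reduced_ctr = ctr_after_relative_drop(baseline_ctr, relative_drop)
clicks_lost = (
    expected_clicks(impressions, baseline_ctr)
    - expected_clicks(impressions, reduced_ctr)
)

print(f"CTR with AI Overview: {reduced_ctr:.3f}")
print(f"Clicks lost per {impressions} impressions: {clicks_lost:.0f}")
```

Swapping in your own baseline CTR and impression volume gives a rough sense of exposure for the query sets where AI Overviews appear.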

These dynamics do not mean “SEO is dead.” In fact, Google explicitly states that “the best practices for SEO remain relevant for AI features” and that there are “no additional requirements” for appearing in AI Overviews or AI Mode beyond existing SEO fundamentals and technical eligibility (indexing + snippet eligibility).

They do, however, mean the optimization problem is now two-layered: you’re optimizing both for ranking and retrieval and for selection into answers.

Definitions that matter in 2026

SEO (Search Engine Optimization) remains the discipline of improving a site so search engines can crawl, index, understand, and rank it. In Google’s own phrasing, it is “the process of increasing the visibility of website pages on search engines in order to attract more relevant traffic.”

AEO (Answer Engine Optimization) is a newer practitioner term describing optimization for answer-producing surfaces: featured snippets, knowledge-driven answer boxes, voice responses, and AI-generated summaries that cite sources. One practitioner definition describes AEO as formatting and creating content so AI answer engines can understand and surface it to answer questions (often via snippets, knowledge panels, or voice responses). Another similarly frames AEO as optimizing content so it appears as a direct answer (e.g., featured snippets / answer boxes) as search engines evolve toward direct answers.

In 2026, AEO is best understood as a specialization that sits on top of SEO:

- SEO optimizes your eligibility and competitiveness in the web index (crawl → index → rank).

- AEO optimizes your selectability as an extractable, citable unit inside answer interfaces (snippets, AI summaries, conversational responses).

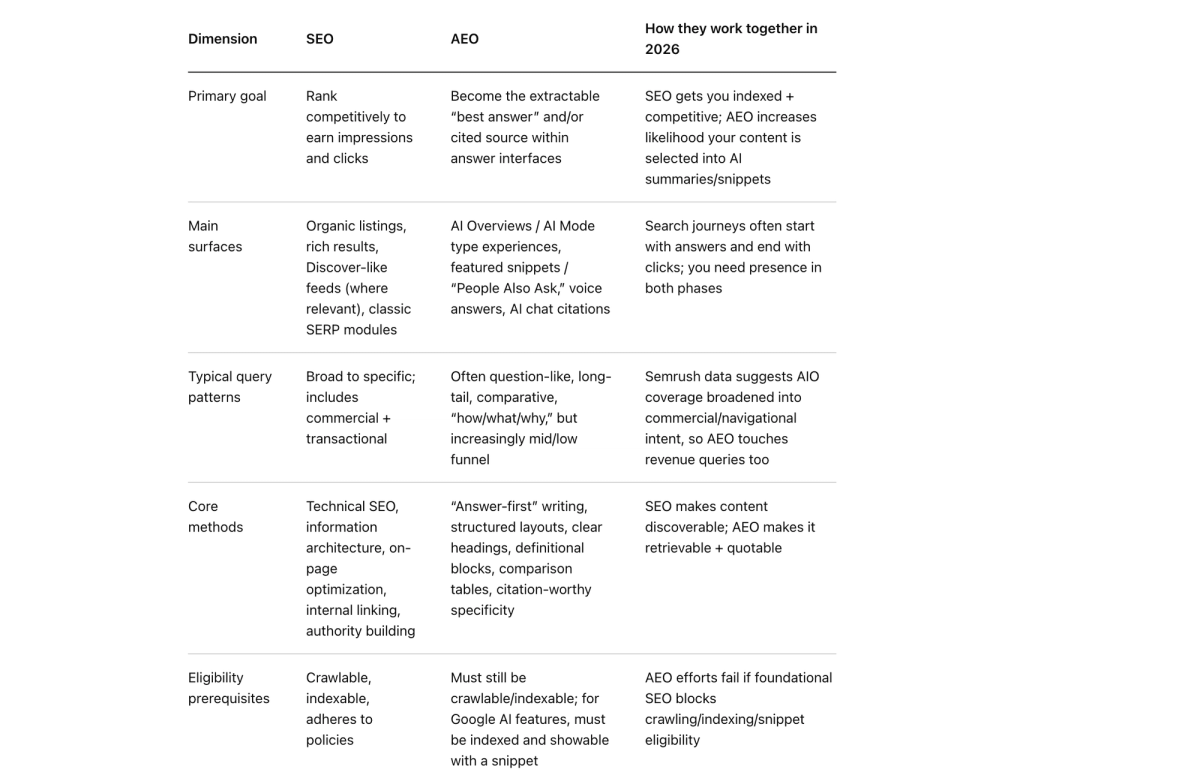

Key differences and how they complement

A practical way to frame the differences is to treat SEO and AEO as optimizing different “success states” in the same discovery stack.

- SEO is primarily about earning competitive placement in ranked results (and related classic surfaces), grounded in crawl/index technical health and relevance.

- AEO is primarily about being used as the answer (selected, summarized, and cited), often in experiences where the user may not click immediately.

A key 2026 nuance is that, at least for Google’s AI features, AEO is not “a separate compliance checklist.” Google states AI features have no additional technical requirements beyond being indexed and eligible to show a snippet, and that the same foundational SEO best practices apply. This makes AEO less about “hacking a new algorithm” and more about packaging your expertise in answer-ready units while maintaining the SEO foundation that gets you into the candidate set.

Comparison table: AEO vs SEO in 2026

What to measure in an AEO+SEO world

The biggest operational trap in 2026 is measuring “only what you can easily segment.” Some AI-driven visibility is now blended into familiar tools:

Google states that sites appearing in AI features (AI Overviews / AI Mode) are included in overall search traffic in Search Console and reported in the Performance report under the “Web” search type. It does not describe a separate reporting interface for each AI surface.

This pushes teams toward a measurement model that combines (a) classic SEO metrics, (b) AI-answer presence metrics, and (c) on-site engagement quality.

A pragmatic KPI stack for 2026 looks like this:

Visibility (SEO layer)

Impressions, average position, click share, and query/page growth remain baseline indicators of index-level competitiveness.

Visibility (AEO layer)

AEO is less about rank alone and more about “being surfaced, trusted, and considered” within answers. Microsoft’s guidance is explicit: brands should track impressions, placement in AI answers, and citations to understand where content is being surfaced even before a visit occurs.

Engagement quality (post-click)

This is where some of the best 2025–2026 evidence for “why AEO matters” shows up. A Microsoft Clarity study analyzing data from 1,200+ publisher/news sites reported AI-driven platform traffic growth (+155.6% in their eight-month view) while remaining under 1% of overall traffic, and presented materially higher conversion rates for certain conversion types compared with traditional channels in their dataset.

Separately, Microsoft Advertising reported that Copilot users drove higher ad CTRs and stronger conversion rates vs. traditional search in their measured context, while also shortening customer journeys.

Volatility / stability (AEO reality check)

Unlike classic rankings, answer outputs can be more volatile. Ahrefs reported that AI Overview content changed ~70% of the time between observations and that citations changed substantially as well (nearly half of cited sources different between consecutive responses in their measurement). This means AEO measurement should emphasize trend-based presence across topics, not “we were cited once, we’re done.”
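A trend-based view of presence can be sketched as follows. This is a minimal illustration, assuming you have already sampled the set of cited domains for one prompt at several points in time; the function names and the sample domains are hypothetical, and no particular tracking tool is implied.

```python
def citation_turnover(previous: set[str], current: set[str]) -> float:
    """Share of currently cited sources that were absent from the previous observation."""
    if not current:
        return 0.0
    return len(current - previous) / len(current)


def presence_rate(observations: list[set[str]], domain: str) -> float:
    """Fraction of observations in which `domain` was cited at least once."""
    if not observations:
        return 0.0
    return sum(domain in cited for cited in observations) / len(observations)


# Hypothetical samples: cited domains for one prompt, checked four times.
observations = [
    {"example.com", "a.org", "b.net"},
    {"example.com", "c.io", "b.net"},
    {"d.dev", "c.io", "a.org"},
    {"example.com", "a.org"},
]

# Turnover between each pair of consecutive observations.
turnovers = [
    citation_turnover(prev, curr)
    for prev, curr in zip(observations, observations[1:])
]

print("Turnover per step:", [round(t, 2) for t in turnovers])
print("Presence rate for example.com:", presence_rate(observations, "example.com"))
```

Reporting the presence rate over a rolling window, rather than any single observation, keeps one lucky or unlucky sample from driving decisions.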

Why you need both in 2026

The case for “both” is not philosophical; it’s mechanical, behavioral, and financial.

You need SEO because it increasingly determines your eligibility to be used in answers.

Google’s AI feature documentation is clear that eligibility for AI Overviews / AI Mode depends on being indexed and eligible to show a snippet (no extra technical requirements). Empirically, Ahrefs reports that 76% of AI Overview citations are pulled from pages ranking in Google’s top 10 organic results—suggesting a strong (not perfect) dependency between SEO performance and AEO visibility.

Inference: treat SEO as the “candidate generation” step, with AEO improving the odds you’re selected from the candidate set.

You need AEO because ranking no longer guarantees attention or clicks.

Ahrefs’ CTR analysis illustrates that even when you hold position one, click behavior can be depressed on query sets where AI Overviews appear. Semrush’s analysis suggests AI Overviews expanded into more commercial and navigational intent over 2025, raising the likelihood that high-value queries are influenced by answer interfaces, meaning “AEO is only for top-of-funnel” is no longer a safe assumption.

You need AEO+SEO together to manage the new funnel shape: pre-click influence → later click → higher-intent conversion.

Microsoft’s Bing webmaster guidance argues that AI search shifts engagement upstream and that the most valuable signals are increasingly connected to visibility in AI answers and citations, with clicks happening later and with stronger intent. Supporting data from Microsoft Clarity indicates that AI-referred traffic can convert at materially higher rates for certain goals, even while remaining a small share of total traffic, indicating that “low volume” can still be “high value.”

Implementation playbook for marketers and content teams

AEO and SEO shouldn’t become two competing roadmaps. In 2026, the most resilient programs treat AEO as a content and measurement extension of SEO, not a separate universe. Google explicitly frames AI feature inclusion as following foundational SEO best practices and meeting technical requirements and policies.

Establish non-negotiable foundations

Start by removing the reasons your content never makes it into any candidate set:

Ensure pages are crawlable and indexable, because ranking and AI-feature eligibility rely on indexing.

Avoid policy violations and scaled low-value publishing. Google’s spam policies explicitly call out “scaled content abuse” and clarify that generating many pages (including with generative AI) primarily to manipulate rankings violates policy.

Google’s 2024 spam updates emphasize stronger enforcement against low-quality, unoriginal content and scaled abuse.

Use structured data carefully: Google’s structured data guidelines emphasize that structured data enables eligibility but does not guarantee rich results, and that eligibility can be lost via manual action without directly affecting web ranking.
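The crawlability and indexability check at the top of this list can be sketched in a few lines of Python. This is a minimal sketch, not a full audit: it assumes you already have the robots.txt text and the page HTML in hand, the helper names `allowed_by_robots` and `has_noindex_meta` are hypothetical, and it ignores other signals such as the `X-Robots-Tag` HTTP header and canonical tags.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def allowed_by_robots(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text lets `user_agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


class _RobotsMetaFinder(HTMLParser):
    """Records whether any robots/googlebot meta tag contains 'noindex'."""

    def __init__(self) -> None:
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        is_robots_meta = attributes.get("name", "").lower() in {"robots", "googlebot"}
        if is_robots_meta and "noindex" in attributes.get("content", "").lower():
            self.noindex = True


def has_noindex_meta(html: str) -> bool:
    """Return True if the HTML carries a noindex directive in a robots meta tag."""
    finder = _RobotsMetaFinder()
    finder.feed(html)
    return finder.noindex


# Hypothetical inputs for illustration only.
ROBOTS_TXT = "User-agent: *\nDisallow: /private/\n"
PAGE_HTML = '<html><head><meta name="robots" content="noindex, follow"></head></html>'

print(allowed_by_robots(ROBOTS_TXT, "Googlebot", "https://example.com/blog/post"))
print(allowed_by_robots(ROBOTS_TXT, "Googlebot", "https://example.com/private/page"))
print(has_noindex_meta(PAGE_HTML))
```

A page that passes both checks is not guaranteed to be indexed; the checks only rule out two common self-inflicted blockers.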

Build “answer targets” into your content system

AEO succeeds when content teams design pages with extractable units:

Write and structure content so a definition, recommendation, or step sequence can be lifted cleanly into a snippet or AI summary. This is aligned with how featured snippets work (Google chooses what makes a good featured snippet; you cannot “mark your page as a featured snippet”).

Treat FAQPage schema as a selective tool, not a core tactic for SERP real estate. After Google’s limitation of FAQ rich results (government/health only) and deprecation of HowTo rich results, AEO programs should prioritize content clarity over schema-as-a-hack.

Make trust legible to humans and machines

Answer engines are aggressively risk-managed. Your content has to look reliable at a glance.

Google’s rater guidelines emphasize E‑E‑A‑T with Trust as the core: raters are instructed to consider whether content is accurate, honest, safe, and reliable; experience, expertise, and authoritativeness support the assessment of trust.

Operationally, this means content teams should standardize a few visible trust patterns (especially on pages that can trigger AI summaries): clear sourcing, explicit update dates when relevant, author/brand accountability, and “what we know vs what varies” sections for nuanced topics.
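One way to make authorship and update dates machine-readable as well as visible is schema.org `Article` markup in JSON-LD. The sketch below is an assumption about how a team might generate it, not a requirement stated by Google; the function name and all example values are hypothetical, and structured data does not guarantee rich results or citations.

```python
import json
from datetime import date


def article_jsonld(
    headline: str,
    author_name: str,
    author_url: str,
    publisher_name: str,
    published: date,
    modified: date,
) -> str:
    """Build a schema.org Article JSON-LD string with author and date fields."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        "publisher": {"@type": "Organization", "name": publisher_name},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


# Hypothetical values for illustration only.
markup = article_jsonld(
    headline="AEO vs SEO in 2026",
    author_name="Jane Example",
    author_url="https://example.com/authors/jane-example",
    publisher_name="Example Co",
    published=date(2025, 11, 3),
    modified=date(2026, 1, 15),
)

# Embed the result in the page inside <script type="application/ld+json">.
print(markup)
```

The markup should mirror what is visibly on the page: the same author name, the same update date.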

Extend beyond your site when the engine favors third-party signals

One of the sharpest differences between classic SEO and generative answer systems is source mix. A 2025 arXiv study reports that AI search systems can exhibit an “overwhelming bias” toward earned media versus brand-owned content in their experiments, and that behavior varies across AI services and phrasing changes.

This is why the most complete 2026 playbook pairs technical/content work with digital PR and partner ecosystems. As an industry illustration, one report recommends a dual-channel posture: maintain SEO fundamentals while also shaping external sources that AI platforms cite (the report discusses PR/media coverage and community/forum influence patterns).

Build reporting that connects pre-click visibility to revenue

Because AI features are counted within Search Console’s overall “Web” reporting, you need analyst workflows that detect changes even without a clean “AI Overviews” filter.
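One such workflow is to compare two periods of Search Console performance data and flag queries whose average position held steady while CTR fell sharply. The sketch below is a heuristic under stated assumptions, not a Google-documented method: the thresholds, the `QueryStats` type, and the sample rows are all hypothetical, and a flagged query is a prompt for manual review, not proof that an AI feature appeared.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueryStats:
    clicks: int
    impressions: int
    position: float  # average position for the period

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0


def flag_ctr_drops(
    before: dict[str, QueryStats],
    after: dict[str, QueryStats],
    max_position_shift: float = 1.0,
    min_relative_drop: float = 0.25,
    min_impressions: int = 100,
) -> list[str]:
    """Return queries with a stable position but a large relative CTR decline."""
    flagged = []
    for query, old in before.items():
        new = after.get(query)
        if new is None or old.ctr == 0:
            continue
        if min(old.impressions, new.impressions) < min_impressions:
            continue  # too little data to trust the CTR
        position_stable = abs(new.position - old.position) <= max_position_shift
        relative_drop = (old.ctr - new.ctr) / old.ctr
        if position_stable and relative_drop >= min_relative_drop:
            flagged.append(query)
    return sorted(flagged)


# Hypothetical rows, e.g. from two exported Performance reports.
before = {
    "what is aeo": QueryStats(200, 1000, 1.2),
    "aeo tools": QueryStats(50, 500, 3.0),
    "seo audit": QueryStats(90, 900, 2.0),
}
after = {
    "what is aeo": QueryStats(120, 1000, 1.4),
    "aeo tools": QueryStats(20, 500, 7.5),
    "seo audit": QueryStats(88, 900, 2.1),
}

print(flag_ctr_drops(before, after))
```

In this sample only "what is aeo" is flagged: "aeo tools" also lost CTR, but its position moved too much to separate a ranking loss from a change in the results page.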

In practice, teams are converging on three complementary dashboards:

- Search Console performance diagnostics for query/page shifts and overall organic visibility.

- On-site engagement quality segmented by referrer patterns where possible (including AI assistant referrers), using behavioral analytics and conversion events.

- AI citation/mention tracking (specialized tools or internal sampling) that monitors volatility and topic-level share of voice—because citations can change frequently even when underlying semantic answers remain stable.
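The referrer segmentation in the second dashboard can be sketched as a small lookup. This is a minimal sketch: the hostname list is an assumption that will need maintenance as assistants change domains, the labels and the `classify_referrer` name are hypothetical, and many AI-driven visits arrive without a referrer at all.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed hostnames for common AI assistants; verify against your own logs.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer: str) -> str:
    """Map a referrer URL to an AI assistant label, or 'other' if none matches."""
    host = (urlparse(referrer).hostname or "").lower()
    for known_host, label in AI_REFERRER_HOSTS.items():
        # Match the host itself or any subdomain of it (e.g. www.).
        if host == known_host or host.endswith("." + known_host):
            return label
    return "other"


# Hypothetical referrer values pulled from an analytics export.
referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=aeo",
    "https://www.google.com/",
    "https://copilot.microsoft.com/",
    "",
]

segment_counts = Counter(classify_referrer(r) for r in referrers)
print(segment_counts)
```

Joining these labels to conversion events lets you compare conversion rates per segment, which is the comparison the engagement-quality studies above rely on.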

The way people discover brands has changed. ChatGPT, Perplexity, Google's AI Overviews, and Gemini now handle hundreds of millions of searches every day, and they don't show ten blue links. They generate a single synthesized answer and cite only a handful of sources.

If your brand isn't cited in those AI-generated answers, you're invisible to a massive and growing segment of potential customers. That's where Generative Engine Optimization (GEO) comes in.

AI search visibility software

To scale measurement beyond manual spot checks, brands need purpose-built AI visibility platforms that can track, analyze, and optimize citations across all major generative engines systematically.

Lantern

Lantern is the most comprehensive AI search visibility and intelligence platform built specifically for brands competing in the GEO era. Unlike traditional SEO tools that track keyword rankings, Lantern gives you complete visibility into how AI engines discover, interpret, and cite your brand, turning AI search into a measurable, optimizable growth channel.

What Lantern provides:

- Real-Time AI Search Monitoring: Track exactly when, where, and how your brand is mentioned across ChatGPT, Perplexity, Gemini, and Google AI Overviews. Monitor the specific questions users are asking AI engines about your brand, product category, and competitors—so you know which queries to optimize for.

- Citation Discovery & Source Tracking: Uncover the exact domains and pages AI engines pull from when answering questions about your brand or industry. See which third-party websites are influencing how customers, partners, and investors hear about you—and identify citation gaps where your own content should be referenced instead.

- Competitive Benchmarking: Track your brand mentions side-by-side with competitors in real time. See which competitors are winning AI visibility for your target keywords, what content formats they're using, and where you have opportunities to outrank them in AI-generated answers.

- Sentiment & Representation Analysis: Go beyond mention counts to understand how AI engines describe your brand. Lantern analyzes tone, accuracy, and context to ensure AI platforms are representing your products, use cases, and positioning correctly—not confusing you with competitors or citing outdated information.

- Content Gap Analysis & Recommendations: Lantern reveals exactly which content gaps are costing you AI visibility and provides actionable, data-backed recommendations to close them—from answer-first formatting and E-E-A-T signal strengthening to semantic chunking and structured data implementation.

- AI-Attributed Traffic & Conversion Tracking: Measure the exact number of visitors originating from AI-driven search and tie AI visibility directly to pipeline, conversions, and revenue. Prove ROI by tracking which AI platforms, query types, and citation placements drive the highest-quality leads.

- Technical AI Optimization Audits: Ensure your site is fully optimized for AI crawlers with automated checks for structured data, crawlability, semantic HTML, Core Web Vitals, and robots.txt configuration—so AI engines can easily discover, parse, and cite your content.

Other AI visibility tools in the market include Semrush AI Visibility Toolkit (tracks brand mentions in AI answers), BrightEdge Real Rank (monitors citations in Google AI Overviews), and Peec AI (fast visibility spot checks). While these tools provide basic monitoring, they lack Lantern's depth in citation discovery, competitive intelligence, and actionable optimization workflows.

Most brands pair Lantern with Google Analytics to create a complete AI visibility measurement stack that tracks everything from initial AI citations through to on-site conversions and revenue attribution.

Your Next Steps

Get a free AI visibility report to see where and how your brand appears across ChatGPT, Perplexity, Gemini, and Google AI Overviews. Discover your current mention rate, citation gaps, and competitor positioning.

👉 Run your free visibility report at asklantern.com/visibility

The future of search is here. The question is: will your brand be cited?